Copyright of this article belongs to the author. Please do not reproduce it without permission.

Prerequisites

- OS: Ubuntu 18.04 amd64

- Ceph version: Octopus

-

-

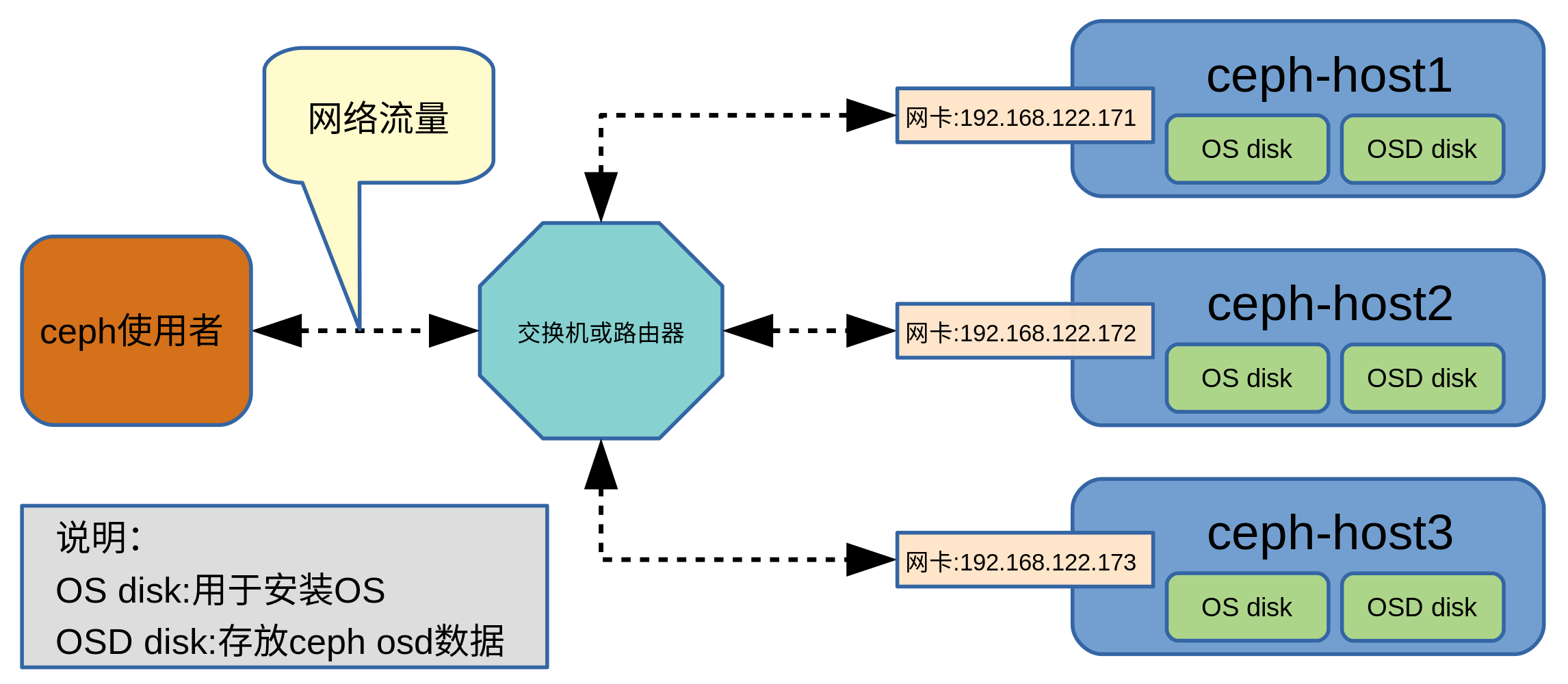

ceph部署架构

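Preparation before deploying Ceph

Configure the hosts

Set the hostname and IP address of the three mon hosts:

| Host | hostname | IP |
| --- | --- | --- |
| Host 1 | ceph-host1 | 192.168.122.171 |
| Host 2 | ceph-host2 | 192.168.122.172 |
| Host 3 | ceph-host3 | 192.168.122.173 |

- Change the hostname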

- Run the command:

sudo hostnamectl set-hostname new_hostname

- Edit /etc/hosts and change the relevant line to:

127.0.1.1 new_hostname

Example:

sudo hostnamectl set-hostname ceph-host1

Then change the relevant line in /etc/hosts to:

127.0.1.1 ceph-host1
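The /etc/hosts edit can also be scripted. The function below is a minimal sketch (set_loopback_hostname is a hypothetical name, not part of any tool): it rewrites the existing 127.0.1.1 line, or appends one if the file has none. It must run as root when pointed at /etc/hosts; pass a copy of the file as the second argument to try it first.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set the 127.0.1.1 entry of a hosts file.
# Usage: set_loopback_hostname NEW_HOSTNAME [HOSTS_FILE]
set_loopback_hostname() {
    local new_hostname="$1"
    local hosts_file="${2:-/etc/hosts}"
    if grep -q '^127\.0\.1\.1[[:space:]]' "$hosts_file"; then
        # Rewrite the existing 127.0.1.1 line in place
        sed -i "s/^127\.0\.1\.1[[:space:]].*/127.0.1.1 ${new_hostname}/" "$hosts_file"
    else
        # No 127.0.1.1 line yet: append one
        printf '127.0.1.1 %s\n' "$new_hostname" >> "$hosts_file"
    fi
}
```

For example, after `sudo hostnamectl set-hostname ceph-host1`, define and call `set_loopback_hostname ceph-host1` from a root shell.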

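- Change the IP configuration

- Edit the file /etc/netplan/01-netcfg.yaml

Note: the configuration file name may differ; adjust it to your system.

- Run the command:

sudo netplan generate

- Run the following command:

Note: this command makes the new configuration take effect. Before running it, make sure the host will still be reachable once the new configuration is active.

sudo netplan apply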
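The article does not show the contents of /etc/netplan/01-netcfg.yaml. As a hedged sketch, a static-address configuration for one host could be written as below. The interface name `ens3` and the gateway/DNS address 192.168.122.1 (the libvirt default network) are assumptions, not values from the article, so check the interface name with `ip link` first; write_netplan_config is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a static-IP netplan config.
# Usage: write_netplan_config IP/PREFIX [OUTPUT_FILE]
# ASSUMED defaults: IFACE=ens3, GATEWAY=192.168.122.1 (override via env vars).
write_netplan_config() {
    local ip_cidr="$1"
    local out="${2:-/etc/netplan/01-netcfg.yaml}"
    local iface="${IFACE:-ens3}"
    local gateway="${GATEWAY:-192.168.122.1}"
    cat > "$out" <<EOF
network:
  version: 2
  renderer: networkd
  ethernets:
    ${iface}:
      dhcp4: no
      addresses: [${ip_cidr}]
      gateway4: ${gateway}
      nameservers:
        addresses: [${gateway}]
EOF
}
```

On ceph-host1 this would be called as root with `192.168.122.171/24`, followed by `sudo netplan generate` and `sudo netplan apply`; the other two hosts use .172 and .173.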

Add the Ceph package repository on all three hosts

- Add the release key with apt-key:

wget -q -O- 'https://download.ceph.com/keys/release.asc' | sudo apt-key add -

- Add the Ceph Octopus repository:

sudo apt-add-repository 'deb https://download.ceph.com/debian-octopus/ bionic main'

Install the required packages by running the following command on each of the three hosts:

sudo apt-get update && sudo apt-get install ceph ceph-mds -y
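Because the repository and package steps are identical on all three hosts, they can be driven from one machine over SSH. This is a sketch that assumes the user `test` (the account used in the article's scp example) can SSH to each host and run sudo; install_ceph_everywhere is a hypothetical name. Setting `DRY_RUN=1` only prints the commands.

```shell
#!/usr/bin/env bash
# Hosts from the table above
HOSTS=(192.168.122.171 192.168.122.172 192.168.122.173)

# The key, repository and install steps as one remote command line
INSTALL_CMD="wget -q -O- 'https://download.ceph.com/keys/release.asc' | sudo apt-key add - \
&& sudo apt-add-repository 'deb https://download.ceph.com/debian-octopus/ bionic main' \
&& sudo apt-get update && sudo apt-get install ceph ceph-mds -y"

# Hypothetical helper: run INSTALL_CMD on every host (ASSUMES SSH access as "test").
install_ceph_everywhere() {
    local host
    for host in "${HOSTS[@]}"; do
        if [ "${DRY_RUN:-0}" = 1 ]; then
            echo "+ ssh -t test@${host} ${INSTALL_CMD}"
        else
            # -t allocates a terminal so sudo can prompt for a password
            ssh -t "test@${host}" "$INSTALL_CMD"
        fi
    done
}
```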

Deploy Ceph

Configure the bootstrap mon host

Note: ceph-host1 is chosen as the bootstrap mon host; all of the following commands are run on ceph-host1.

- Generate a UUID with the following command:

uuidgen

Example:

test@ceph-host1:~$ uuidgen

11a72113-e0d2-45ee-9be7-217b3f7ac2c6

- Edit the configuration file /etc/ceph/ceph.conf

The file has the following format:

[global]

fsid = {uuid}

mon initial members = {hostname}

mon host = {ip-address}

public network = {network}

auth cluster required = cephx

auth service required = cephx

auth client required = cephx

Example:

[global]

fsid = 11a72113-e0d2-45ee-9be7-217b3f7ac2c6

mon initial members = ceph-host1

mon host = 192.168.122.171

public network = 192.168.122.0/24

auth cluster required = cephx

auth service required = cephx

auth client required = cephx
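To avoid typos when filling in the template, the [global] section can be rendered from four values. A minimal sketch; render_ceph_conf is a hypothetical helper that writes to standard output, so the result can be reviewed before being saved with `sudo tee`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the [global] section of ceph.conf.
# Usage: render_ceph_conf FSID MON_HOSTNAMES MON_IPS PUBLIC_NETWORK
render_ceph_conf() {
    local fsid="$1" members="$2" hosts="$3" network="$4"
    cat <<EOF
[global]
fsid = ${fsid}
mon initial members = ${members}
mon host = ${hosts}
public network = ${network}
auth cluster required = cephx
auth service required = cephx
auth client required = cephx
EOF
}
```

For example: `FSID=$(uuidgen)` followed by `render_ceph_conf "$FSID" ceph-host1 192.168.122.171 192.168.122.0/24 | sudo tee /etc/ceph/ceph.conf`. Keep the same `$FSID` for the monmaptool step.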

- Create a keyring for the cluster monitors:

ceph-authtool --create-keyring ~/ceph.mon.keyring --gen-key -n mon. --cap mon 'allow *'

- Create the keyring for the administrator (client.admin):

sudo ceph-authtool --create-keyring /etc/ceph/ceph.client.admin.keyring --gen-key -n client.admin --cap mon 'allow *' --cap osd 'allow *' --cap mds 'allow *' --cap mgr 'allow *'

sudo chmod 644 /etc/ceph/ceph.client.admin.keyring

- Create the keyring for bootstrap-osd:

sudo ceph-authtool --create-keyring /etc/ceph/ceph.client.bootstrap-osd.keyring --gen-key -n client.bootstrap-osd --cap mon 'profile bootstrap-osd' --cap mgr 'allow r'

sudo chmod 644 /etc/ceph/ceph.client.bootstrap-osd.keyring

- Import the client.admin and client.bootstrap-osd keys into ceph.mon.keyring:

sudo ceph-authtool ~/ceph.mon.keyring --import-keyring /etc/ceph/ceph.client.admin.keyring

sudo ceph-authtool ~/ceph.mon.keyring --import-keyring /etc/ceph/ceph.client.bootstrap-osd.keyring

- Change the owner and group of ceph.mon.keyring to ceph:

sudo chown ceph:ceph ~/ceph.mon.keyring

- Create the mon map:

monmaptool --create --add {hostname1} {ip-address1} --fsid {uuid} ~/monmap

Example:

monmaptool --create --add ceph-host1 192.168.122.171 --fsid 11a72113-e0d2-45ee-9be7-217b3f7ac2c6 ~/monmap

- Create the default data directory on the mon host:

sudo -u ceph mkdir /var/lib/ceph/mon/ceph-{hostname}

Example:

sudo -u ceph mkdir /var/lib/ceph/mon/ceph-ceph-host1

- Initialize the mon daemon's data store from the mon map and keyring:

sudo -u ceph ceph-mon --mkfs -i {hostname} --monmap ~/monmap --keyring ~/ceph.mon.keyring -c /etc/ceph/ceph.conf

Example:

sudo -u ceph ceph-mon --mkfs -i ceph-host1 --monmap ~/monmap --keyring ~/ceph.mon.keyring -c /etc/ceph/ceph.conf

- Start the mon and enable it at boot:

sudo systemctl start ceph-mon@{hostname}

sudo systemctl enable ceph-mon@{hostname}

Example:

sudo systemctl start ceph-mon@ceph-host1

sudo systemctl enable ceph-mon@ceph-host1
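The monmap, data directory, mkfs and systemd steps above can be collected into one function for the bootstrap host. This is a sketch that assumes ~/ceph.mon.keyring and /etc/ceph/ceph.conf were already prepared as described; `bootstrap_first_mon` and `run` are hypothetical helpers. With `DRY_RUN=1` each command is printed instead of executed, so the sequence can be reviewed first.

```shell
#!/usr/bin/env bash
# Print the command when DRY_RUN=1, otherwise execute it.
run() {
    if [ "${DRY_RUN:-0}" = 1 ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Hypothetical helper: bootstrap the first monitor.
# Usage: bootstrap_first_mon HOSTNAME IP FSID
# Steps are chained with && so the function stops at the first failure.
bootstrap_first_mon() {
    local host="$1" ip="$2" fsid="$3"
    run monmaptool --create --add "$host" "$ip" --fsid "$fsid" "$HOME/monmap" &&
    run sudo -u ceph mkdir "/var/lib/ceph/mon/ceph-${host}" &&
    run sudo -u ceph ceph-mon --mkfs -i "$host" --monmap "$HOME/monmap" \
        --keyring "$HOME/ceph.mon.keyring" -c /etc/ceph/ceph.conf &&
    run sudo systemctl start "ceph-mon@${host}" &&
    run sudo systemctl enable "ceph-mon@${host}"
}
```

For example, `DRY_RUN=1 bootstrap_first_mon ceph-host1 192.168.122.171 11a72113-e0d2-45ee-9be7-217b3f7ac2c6` prints the five commands; dropping `DRY_RUN=1` runs them.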

- Check the mon status:

sudo ceph -s

Example output:

cluster:

id: 11a72113-e0d2-45ee-9be7-217b3f7ac2c6

health: HEALTH_WARN

mon is allowing insecure global_id reclaim

1 monitors have not enabled msgr2

services:

mon: 1 daemons, quorum ceph-host1 (age 81s)

mgr: no daemons active

osd: 0 osds: 0 up, 0 in

data:

pools: 0 pools, 0 pgs

objects: 0 objects, 0 B

usage: 0 B used, 0 B / 0 B avail

pgs:

Add mon hosts

- On the new mon host, create the default data directory:

sudo -u ceph mkdir /var/lib/ceph/mon/ceph-{hostname}

Example:

sudo -u ceph mkdir /var/lib/ceph/mon/ceph-ceph-host2

- On an existing mon host, create a temporary directory for the exported files (it is deleted later):

mkdir ~/tmp

- On the existing mon host, export the mon keyring:

sudo ceph auth get mon. -o ~/tmp/{key-filename}

Example:

sudo ceph auth get mon. -o ~/tmp/mon-keyring

- On the existing mon host, export the mon map:

sudo ceph mon getmap -o ~/tmp/{map-filename}

Example:

sudo ceph mon getmap -o ~/tmp/mon-map

- Copy the exported keyring and map files from the existing mon host to the new mon host, then delete the temporary directory:

Example:

scp -r ~/tmp test@192.168.122.172:~/

rm -rf ~/tmp

- On the existing mon hosts, edit /etc/ceph/ceph.conf to add the new mon host

Note: /etc/ceph/ceph.conf must be identical on all existing mon hosts.

Example of the modified file:

[global]

fsid = 11a72113-e0d2-45ee-9be7-217b3f7ac2c6

mon initial members = ceph-host1,ceph-host2

mon host = 192.168.122.171,192.168.122.172

public network = 192.168.122.0/24

auth cluster required = cephx

auth service required = cephx

auth client required = cephx
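The change above amounts to appending the new host to `mon initial members` and its address to `mon host`. A sketch with sed, assuming each setting sits on a single line written exactly as in the example (`name = value` with single spaces); add_mon_to_conf is a hypothetical name. It is not idempotent: running it twice for the same host appends the host twice.

```shell
#!/usr/bin/env bash
# Hypothetical helper: add a monitor to an existing ceph.conf.
# Usage: add_mon_to_conf HOSTNAME IP [CONF_FILE]
add_mon_to_conf() {
    local host="$1" ip="$2" conf="${3:-/etc/ceph/ceph.conf}"
    # \1 keeps the existing value list; the new entry is appended after a comma
    sed -i \
        -e "s/^\(mon initial members = .*\)$/\1,${host}/" \
        -e "s/^\(mon host = .*\)$/\1,${ip}/" \
        "$conf"
}
```

Run it as root on every existing mon host (for example `add_mon_to_conf ceph-host2 192.168.122.172`) so that the files stay identical.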

- Copy /etc/ceph/ceph.conf from an existing mon host to /etc/ceph/ on the new mon host

- On the new mon host, initialize the mon daemon's data store from the mon map and keyring:

sudo -u ceph ceph-mon --mkfs -i {new-hostname} --monmap {monmap-file} --keyring {mon-keyring}

Example:

sudo -u ceph ceph-mon --mkfs -i ceph-host2 --monmap ~/tmp/mon-map --keyring ~/tmp/mon-keyring

- Copy the bootstrap-osd and client.admin keyrings from an existing mon host to /etc/ceph/ on the new mon host

Keyring file locations, for example:

/etc/ceph/ceph.client.bootstrap-osd.keyring

/etc/ceph/ceph.client.admin.keyring

- Start the mon on the new mon host and enable it at boot:

sudo systemctl start ceph-mon@{new-hostname}

sudo systemctl enable ceph-mon@{new-hostname}

Example:

sudo systemctl start ceph-mon@ceph-host2

sudo systemctl enable ceph-mon@ceph-host2

- Clean up the temporary files on the new mon host:

rm -r ~/tmp

- Repeat the steps above to add the remaining mon hosts

Configure mgr on the mon hosts

- Create the key:

sudo ceph auth get-or-create mgr.{hostname} mon 'allow profile mgr' osd 'allow *' mds 'allow *' -o ~/mgr.keyring

sudo chown ceph:ceph ~/mgr.keyring

Example:

sudo ceph auth get-or-create mgr.ceph-host1 mon 'allow profile mgr' osd 'allow *' mds 'allow *' -o ~/mgr.keyring

sudo chown ceph:ceph ~/mgr.keyring

- Create the mgr directory:

sudo -u ceph mkdir /var/lib/ceph/mgr/ceph-{hostname}

Example:

sudo -u ceph mkdir /var/lib/ceph/mgr/ceph-ceph-host1

- Move the key file into the mgr directory:

sudo mv ~/mgr.keyring /var/lib/ceph/mgr/ceph-{hostname}/keyring

Example:

sudo mv ~/mgr.keyring /var/lib/ceph/mgr/ceph-ceph-host1/keyring

- Start the mgr and enable it at boot:

sudo systemctl start ceph-mgr@{hostname}

sudo systemctl enable ceph-mgr@{hostname}

Example:

sudo systemctl start ceph-mgr@ceph-host1

sudo systemctl enable ceph-mgr@ceph-host1
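Since these mgr steps are repeated on every mon host with only the hostname changing, they can be wrapped in one function. A sketch under the same assumptions as the commands above; `setup_mgr` and `run` are hypothetical helpers, and `DRY_RUN=1` prints the commands instead of running them.

```shell
#!/usr/bin/env bash
# Print the command when DRY_RUN=1, otherwise execute it.
run() {
    if [ "${DRY_RUN:-0}" = 1 ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Hypothetical helper: create, install and start a mgr for one host.
# Usage: setup_mgr HOSTNAME
setup_mgr() {
    local host="$1"
    local dir="/var/lib/ceph/mgr/ceph-${host}"
    run sudo ceph auth get-or-create "mgr.${host}" mon 'allow profile mgr' \
        osd 'allow *' mds 'allow *' -o "$HOME/mgr.keyring" &&
    run sudo chown ceph:ceph "$HOME/mgr.keyring" &&
    run sudo -u ceph mkdir "$dir" &&
    run sudo mv "$HOME/mgr.keyring" "${dir}/keyring" &&
    run sudo systemctl start "ceph-mgr@${host}" &&
    run sudo systemctl enable "ceph-mgr@${host}"
}
```

For example, `setup_mgr ceph-host2` on ceph-host2, after reviewing `DRY_RUN=1 setup_mgr ceph-host2`.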

- Check the mgr status:

sudo ceph -s

Note: the mgr is running if the output contains mgr: {hostname}(active, since XXX) or standbys: {hostname}.

Example output:

cluster:

id: 11a72113-e0d2-45ee-9be7-217b3f7ac2c6

health: HEALTH_WARN

mons are allowing insecure global_id reclaim

1 monitors have not enabled msgr2

OSD count 0 < osd_pool_default_size 3

services:

mon: 3 daemons, quorum ceph-host1,ceph-host2,ceph-host3 (age 24m)

mgr: ceph-host1(active, since 2m)

osd: 0 osds: 0 up, 0 in

data:

pools: 0 pools, 0 pgs

objects: 0 objects, 0 B

usage: 0 B used, 0 B / 0 B avail

pgs:

- Repeat the steps above to configure mgr on the other mon hosts

Add OSDs on the mon hosts

- Generate a UUID on the OSD host:

UUID=$(uuidgen)

echo ${UUID}

Example:

test@ceph-host1:~$ UUID=$(uuidgen)

test@ceph-host1:~$ echo ${UUID}

9ea67285-a547-4d1d-bb82-5a9074d24b3b

- Generate a cephx key for the OSD:

OSD_SECRET=$(ceph-authtool --gen-print-key)

echo ${OSD_SECRET}

Example:

test@ceph-host1:~$ OSD_SECRET=$(ceph-authtool --gen-print-key)

test@ceph-host1:~$ echo ${OSD_SECRET}

AQBFSnFhEScuBRAAMg90aYiBz8rh1+NadWqfmg==

- Create the OSD:

ID=$(echo "{\"cephx_secret\": \"${OSD_SECRET}\"}" | sudo ceph osd new ${UUID} -i - -n client.bootstrap-osd)

echo ${ID}

Example:

test@ceph-host1:~$ ID=$(echo "{\"cephx_secret\": \"${OSD_SECRET}\"}" | sudo ceph osd new ${UUID} -i - -n client.bootstrap-osd)

test@ceph-host1:~$ echo ${ID}

0

- Create the OSD directory:

sudo mkdir /var/lib/ceph/osd/ceph-${ID}

- Create a single partition spanning the whole OSD disk:

sudo fdisk /dev/{disk-name}

Example:

test@ceph-host1:~$ sudo fdisk /dev/vdb

Welcome to fdisk (util-linux 2.31.1).

Changes will remain in memory only, until you decide to write them.

Be careful before using the write command.

Device does not contain a recognized partition table.

Created a new DOS disklabel with disk identifier 0x458c80f3.

Command (m for help): n

Partition type

p primary (0 primary, 0 extended, 4 free)

e extended (container for logical partitions)

Select (default p): p

Partition number (1-4, default 1):

First sector (2048-41943039, default 2048):

Last sector, +sectors or +size{K,M,G,T,P} (2048-41943039, default 41943039):

Created a new partition 1 of type 'Linux' and of size 20 GiB.

Command (m for help): w

The partition table has been altered.

Calling ioctl() to re-read partition table.

Syncing disks.

- Add the following to /etc/ceph/ceph.conf:

[osd.${ID}]

host = {hostname}

bluestore block path = /dev/{disk-name1}

Example:

[osd.0]

host = ceph-host1

bluestore block path = /dev/vdb1
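When several OSDs are added, it is easy to write the same section twice. A minimal sketch that appends the per-OSD section only if it is not already present; add_osd_section is a hypothetical name, and it must run as root when targeting /etc/ceph/ceph.conf.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append an [osd.N] section to ceph.conf once.
# Usage: add_osd_section ID HOSTNAME PARTITION [CONF_FILE]
add_osd_section() {
    local id="$1" host="$2" partition="$3" conf="${4:-/etc/ceph/ceph.conf}"
    # Skip if a line exactly "[osd.ID]" already exists
    if grep -qx "\[osd\.${id}\]" "$conf"; then
        echo "osd.${id} already present in ${conf}" >&2
        return 0
    fi
    printf '\n[osd.%s]\nhost = %s\nbluestore block path = %s\n' \
        "$id" "$host" "$partition" >> "$conf"
}
```

For example, `add_osd_section "$ID" ceph-host1 /dev/vdb1` right after `ceph osd new` has set `ID`.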

- Save the secret to the OSD's keyring file:

sudo ceph-authtool --create-keyring /var/lib/ceph/osd/ceph-${ID}/keyring --name osd.${ID} --add-key ${OSD_SECRET}

Example:

test@ceph-host1:~$ sudo ceph-authtool --create-keyring /var/lib/ceph/osd/ceph-${ID}/keyring --name osd.${ID} --add-key ${OSD_SECRET}

creating /var/lib/ceph/osd/ceph-0/keyring

added entity osd.0 auth(key=AQBFSnFhEScuBRAAMg90aYiBz8rh1+NadWqfmg==)

- Change the ownership of the disk partition to ceph:ceph:

sudo chown ceph:ceph /dev/{disk-partition}

Example:

sudo chown ceph:ceph /dev/vdb1

- Change the owner and group of the OSD directory:

sudo chown -R ceph:ceph /var/lib/ceph/osd/ceph-${ID}

- Initialize the OSD data directory:

sudo -u ceph ceph-osd -i ${ID} --mkfs --osd-uuid ${UUID}

- Make the OSD partition owned by ceph:ceph at boot

Write the following to /lib/udev/rules.d/98-udev-ceph.rules:

SUBSYSTEM=="block", KERNEL=="{disk-partition}", NAME="{disk-partition}", GROUP="ceph", OWNER="ceph"

Example:

SUBSYSTEM=="block", KERNEL=="vdb1", NAME="vdb1", GROUP="ceph", OWNER="ceph"
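The rule file can be generated for all OSD partitions of a host at once. This sketch (write_ceph_udev_rule is a hypothetical name) leaves out the `NAME=` key used in the rule above: according to the udev(7) manual, NAME only renames network interfaces and udev cannot rename block device nodes, so OWNER and GROUP are the keys that matter here. It overwrites the target file on each run, so pass every OSD partition on the host.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write one ownership rule per OSD partition.
# Usage: write_ceph_udev_rule RULES_FILE PARTITION...
write_ceph_udev_rule() {
    local out="$1"; shift
    local part
    : > "$out"    # start from an empty file
    for part in "$@"; do
        printf 'SUBSYSTEM=="block", KERNEL=="%s", OWNER="ceph", GROUP="ceph"\n' \
            "$part" >> "$out"
    done
}
```

For example, as root: `write_ceph_udev_rule /lib/udev/rules.d/98-udev-ceph.rules vdb1`, then `udevadm control --reload && udevadm trigger` to apply it without rebooting.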

- Start the OSD service and enable it at boot:

sudo systemctl start ceph-osd@${ID}

sudo systemctl enable ceph-osd@${ID}

- Sync all Ceph hosts so that /etc/ceph/ceph.conf is identical on every one of them

- Repeat the steps above to add the remaining OSDs

Add MDS on the mon hosts

- Create the data directory:

sudo -u ceph mkdir -p /var/lib/ceph/mds/ceph-{hostname}

Example:

sudo -u ceph mkdir -p /var/lib/ceph/mds/ceph-ceph-host1

- Create the keyring:

sudo -u ceph ceph-authtool --create-keyring /var/lib/ceph/mds/ceph-{hostname}/keyring --gen-key -n mds.{hostname}

Example:

sudo -u ceph ceph-authtool --create-keyring /var/lib/ceph/mds/ceph-ceph-host1/keyring --gen-key -n mds.ceph-host1

- Import the key and set its caps:

sudo -u ceph ceph auth add mds.{hostname} osd "allow rwx" mds "allow" mon "allow profile mds" -i /var/lib/ceph/mds/ceph-{hostname}/keyring

Example:

sudo -u ceph ceph auth add mds.ceph-host1 osd "allow rwx" mds "allow" mon "allow profile mds" -i /var/lib/ceph/mds/ceph-ceph-host1/keyring

- Add the following to /etc/ceph/ceph.conf:

[mds.{hostname}]

host = {hostname}

Example:

[mds.ceph-host1]

host = ceph-host1

- Start the MDS and enable it at boot:

sudo systemctl start ceph-mds@{hostname}

sudo systemctl enable ceph-mds@{hostname}

Example:

sudo systemctl start ceph-mds@ceph-host1

sudo systemctl enable ceph-mds@ceph-host1
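The directory, keyring, caps and systemd steps for an MDS can likewise be wrapped per host. A sketch that mirrors the commands above; `setup_mds` and `run` are hypothetical helpers, and the [mds.{hostname}] section in /etc/ceph/ceph.conf still has to be added separately as shown. `DRY_RUN=1` prints the commands instead of running them.

```shell
#!/usr/bin/env bash
# Print the command when DRY_RUN=1, otherwise execute it.
run() {
    if [ "${DRY_RUN:-0}" = 1 ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Hypothetical helper: create, register and start an MDS for one host.
# Usage: setup_mds HOSTNAME
setup_mds() {
    local host="$1"
    local dir="/var/lib/ceph/mds/ceph-${host}"
    run sudo -u ceph mkdir -p "$dir" &&
    run sudo -u ceph ceph-authtool --create-keyring "${dir}/keyring" --gen-key -n "mds.${host}" &&
    run sudo -u ceph ceph auth add "mds.${host}" osd "allow rwx" mds "allow" \
        mon "allow profile mds" -i "${dir}/keyring" &&
    run sudo systemctl start "ceph-mds@${host}" &&
    run sudo systemctl enable "ceph-mds@${host}"
}
```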

- Check the MDS status:

sudo ceph mds stat

Example output:

test@ceph-host1:~$ sudo ceph mds stat

1 up:standby

- Sync all Ceph hosts so that /etc/ceph/ceph.conf is identical on every one of them

- Repeat the steps above to add the remaining MDS daemons

Create pools

Note: see https://old.ceph.com/pgcalc/ for how to choose the pg-num value in the command.

sudo ceph osd pool create {pool-name} {pg-num}

Example:

test@ceph-host1:~$ sudo ceph osd pool create pool1 64

pool 'pool1' created

test@ceph-host1:~$ sudo ceph osd pool create pool-data 32

pool 'pool-data' created

test@ceph-host1:~$ sudo ceph osd pool create pool-metadata 4

pool 'pool-metadata' created

test@ceph-host1:~$
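Calculators such as pgcalc build on a rule of thumb that older Ceph documentation also quotes: about 100 PGs per OSD, divided by the pool's replica count and weighted by the pool's expected share of the data, then rounded to a power of two. The sketch below always rounds up; pgcalc's own rounding and defaults may differ, so treat the result as a starting point. pg_num_for_pool is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: rough pg_num estimate for one pool.
# Usage: pg_num_for_pool OSD_COUNT REPLICA_SIZE [DATA_PERCENT] [PGS_PER_OSD]
# DATA_PERCENT defaults to 100; PGS_PER_OSD defaults to an ASSUMED 100.
pg_num_for_pool() {
    local osds="$1" size="$2" percent="${3:-100}" per_osd="${4:-100}"
    local target=$(( osds * per_osd * percent / 100 / size ))
    local pg=1
    # Round up to the next power of two
    while [ "$pg" -lt "$target" ]; do
        pg=$(( pg * 2 ))
    done
    echo "$pg"
}
```

With 3 OSDs and the default replica size of 3, `pg_num_for_pool 3 3` gives 128 for a pool holding all of the data, and `pg_num_for_pool 3 3 50` gives 64 for a pool expected to hold half of it.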

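Create the file system

- Create it:

Note: cephfs_name is the file system name, cephfs_metadata_pool_name is the pool that stores the file system metadata, and cephfs_data_pool_name is the pool that stores the file system data.

sudo ceph fs new {cephfs_name} {cephfs_metadata_pool_name} {cephfs_data_pool_name}

Example: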

test@ceph-host1:~$ sudo ceph fs new cephfs1 pool-metadata pool-data

new fs with metadata pool 4 and data pool 3

- List the file systems:

sudo ceph fs ls

Example output:

test@ceph-host1:~$ sudo ceph fs ls

name: cephfs1, metadata pool: pool-metadata, data pools: [pool-data ]
